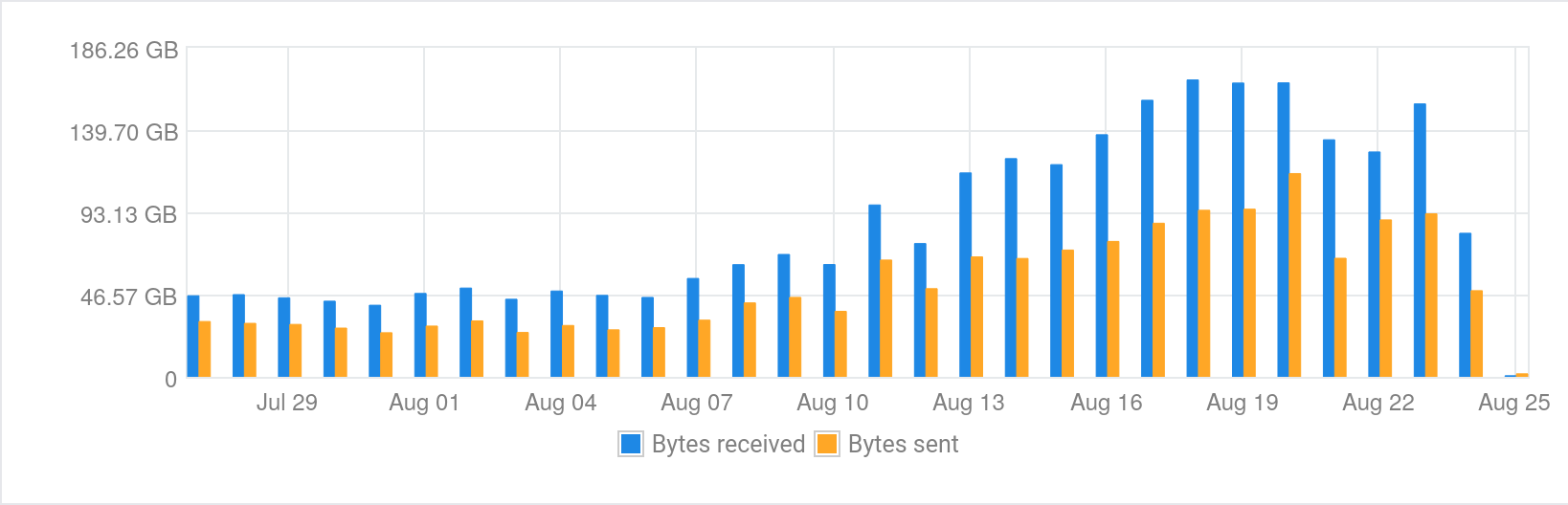

On 24 August I received an email from Vultr saying that my server had used 78% of its 3 TB bandwidth allocation for the month. This was surprising, as the last time I looked I was using only a small fraction of this allocation across the various things I host.

After some investigation I noticed that the Nitter instance I set up six months ago at nitter.decentralised.social seemed to be getting a lot of traffic. In particular it seemed that there were several crawlers, including Googlebot and bingbot, attempting to index the whole site and all its media.

Nitter is an alternative UI for Twitter that is simpler, faster, and free of tracking. I mainly set it up so that I could share Twitter links with my friends without them having to visit Twitter proper. It’s obvious in hindsight, but a Nitter instance is basically a proxy for the entirety of Twitter, so any bots that start crawling it are effectively trying to suck all of Twitter through my little server.

Nitter doesn’t have a robots.txt by default so the first thing I did was fork it and add one that blocked all robots. Unsurprisingly this didn’t have an immediate impact and I was concerned that if the traffic kept up I’d hit my bandwidth limit and start having to pay per GB thereafter.

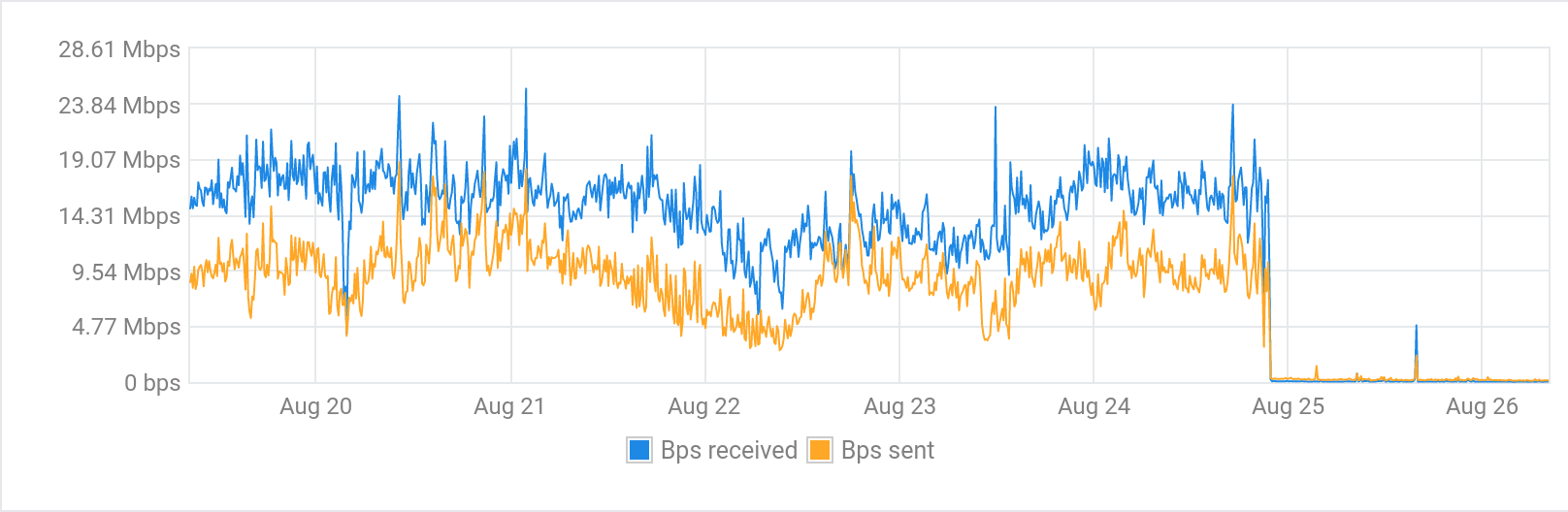

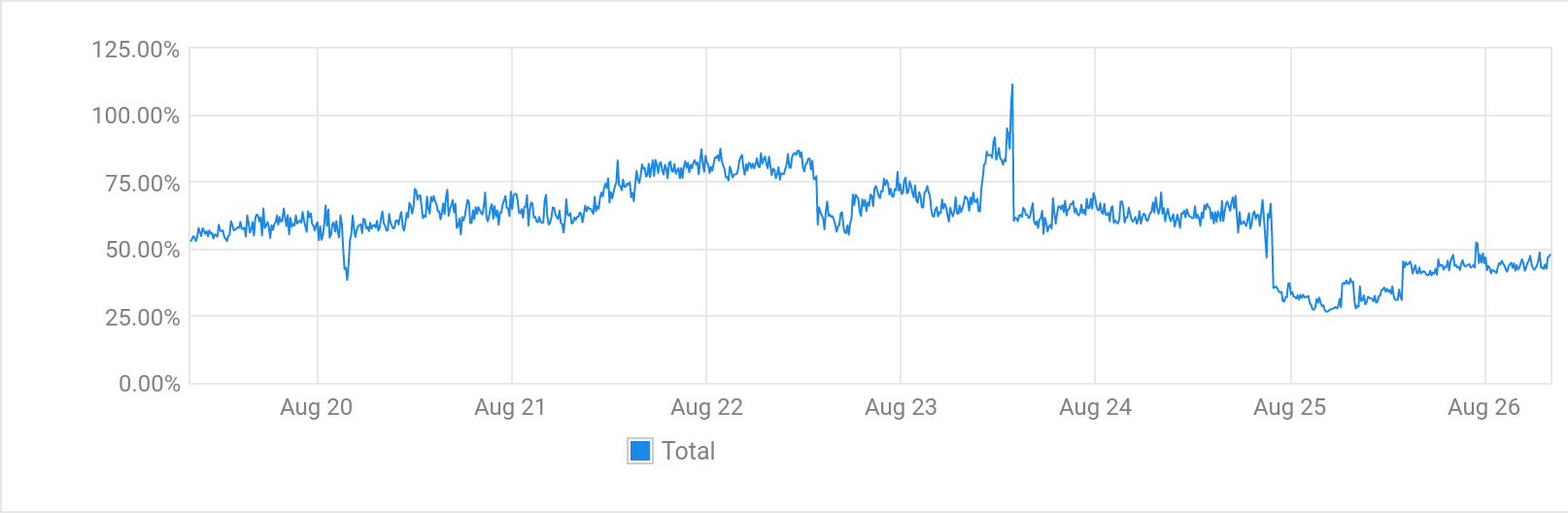

I concluded the best option for now was to block most traffic to the instance until I could work out what to do. Since Varnish fronts most of my web traffic I used it to filter requests and return a very basic 404 page for all but a handful of routes. A day later the impact of this change is obvious in the usage graphs.
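A filter along these lines can be sketched in VCL roughly as follows. This is a minimal sketch rather than the exact configuration used here: the backend address, the `nitter` backend name, and the allowlisted paths (`/robots.txt` plus static asset directories) are all assumptions for illustration. The "handful of routes" actually kept open is not listed above.

```vcl
vcl 4.0;

# Assumption: Nitter listens locally on port 8080. Adjust to the real backend.
backend nitter {
    .host = "127.0.0.1";
    .port = "8080";
}

sub vcl_recv {
    # Only filter requests addressed to the Nitter hostname.
    if (req.http.host ~ "^nitter\.decentralised\.social(:[0-9]+)?$") {
        # Hypothetical allowlist: robots.txt and static assets.
        # Anything that does not match gets a basic synthetic 404
        # without ever reaching the backend.
        if (req.url !~ "^/(robots\.txt$|css/|js/|fonts/)") {
            return (synth(404, "Not Found"));
        }
    }
}
```

Because the 404 is generated by Varnish itself, blocked requests cost only a small response and never cause Nitter to fetch anything from Twitter.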

After letting the changes sit overnight I was still seeing a lot of requests from user-agents that appear to be Chinese bots of some sort. They almost exactly matched the user-agents in this blog post: Blocking aggressive Chinese crawlers/scrapers/bots.

As a result I added some additional configuration to Varnish to block requests from these user-agents, as they were clearly not honouring the robots.txt I added:

```vcl
sub vcl_recv {
    # Reject known aggressive crawlers before they reach the backend.
    if (req.http.user-agent ~ "(Mb2345Browser|LieBaoFast|OPPO A33|SemrushBot)") {
        return (synth(403, "User agent blocked due to excessive traffic."));
    }

    # rest of config here
}
```

What Now?

I liked having the Nitter instance for sharing links but now I’m not sure how to run it in a way that only proxies the things I’m sharing. I don’t really want to be responsible for all of the content posted to Twitter flowing through my server. Perhaps there’s a project idea lurking there, or perhaps I just make my peace with linking to Twitter.